The Complete Guide to Video Review Workflows in 2026

A stage-by-stage framework covering the common failure points, the key tooling decisions, and the scaling challenges in modern video review.

Ask any video editor, motion designer, or post-production supervisor what eats the most time on a project, and the answer is rarely the edit itself. It is the review. More specifically, it is the communication failure that wraps around the review: the email thread that branches into three sub-threads, the feedback that describes a problem without pointing to where it happens, the call that was supposed to clarify notes but instead generated a second round of notes, and the version history that exists only in somebody's head.

Video review workflows matter more now than ever. Content production volume has increased dramatically across virtually every industry. Remote and hybrid teams are the norm, not the exception. Clients expect faster turnaround. And the file formats and quality standards of modern video work are heavier, more precise, and more varied than any general communication tool was ever designed to handle.

This guide covers everything a creative team needs to build, run, and eventually scale a video review workflow that actually works. Whether you are a two-person studio handling social content or an agency running ten concurrent client productions, the principles are the same. Structure first, then tools, and always put the content at the center of the conversation.

What This Guide Covers

- What is a video review workflow? The definition, stages, and why most teams never formally build one

- Where review workflows break down: The five most common failure points that create revision cycles and eat margin

- The rise of real-time review: Why synchronized review sessions are replacing async email feedback

- Building your review process: A stage-by-stage framework you can implement this week

- Choosing review software: What to look for, what to ignore, and what questions to ask

- Scaling as your team grows: How the right process holds up when projects multiply

Chapter 1: What Is a Video Review Workflow?

A video review and approval workflow is the structured process a creative team uses to move a video from rough cut to final sign-off. It sounds simple, but in practice it involves multiple people with different roles, varying levels of technical fluency, different time zones, and conflicting schedules, all trying to agree on something subjective.

The workflow exists to bring order to that process. Done well, it gives every stakeholder a clear role, anchors feedback to specific moments in the content, tracks changes across versions, and produces a single source of truth about what was changed, why, and when it was approved.

Done poorly — or not done at all — the workflow is whatever happens to work on a given project. A Drive folder here, an email thread there, a Slack message at midnight asking whether v4 or v5 is the one going out in the morning.

The Core Stages of a Video Review Workflow

Most video projects move through five identifiable review stages, even if teams do not always name them explicitly.

- Internal rough cut review: The first cut never goes to the client. It goes to your own team. This stage catches obvious problems before they become client problems — pacing that does not land, sequences that do not make sense, audio issues, technical problems. Keep this tight with two or three internal reviewers, a short turnaround, and clear instructions on what to look for.

- Director or creative lead review: Before moving to client-facing rounds, someone with final creative authority should sign off on direction. This ensures the work reflects the brief before you invite the client into the process.

- First client review: The first version the client sees sets the tone for the entire relationship. Share something close enough to judge direction, but frame expectations clearly about what is finished and what is not. This is where you want frame-accurate feedback tools, not email.

- Revision rounds: Based on client feedback, the editor makes changes and submits for another review. The number of rounds should be defined in the project brief. Unlimited revision cycles are a business model problem, not a workflow problem.

- Final approval and delivery: The signed-off version is locked, exported in the required formats, and delivered to the client. A proper workflow captures the written approval at this stage, not just a verbal one.

Each stage should have a clear owner, a defined exit condition, and a place where feedback lives. Without these three things, stages blur into each other and projects get stuck.
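One way to make those three requirements concrete is to write the stages down as data. The sketch below is only an illustration: the `ReviewStage` class, the `missing_fields` helper, and every owner title and exit condition in `STAGES` are hypothetical placeholders, not a prescribed setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewStage:
    name: str
    owner: str              # the single person who moves the stage forward
    exit_condition: str     # what must be true before the next stage starts
    feedback_location: str  # the one place feedback for this stage lives


def missing_fields(stage: ReviewStage) -> list:
    """Return the names of any of the three required fields left blank."""
    required = ("owner", "exit_condition", "feedback_location")
    return [name for name in required if not getattr(stage, name).strip()]


# Placeholder values; substitute your own roles and conditions.
STAGES = [
    ReviewStage("Internal rough cut review", "Lead editor",
                "Internal notes resolved", "Review platform, internal thread"),
    ReviewStage("Director or creative lead review", "Creative lead",
                "Direction signed off against the brief", "Review platform, internal thread"),
    ReviewStage("First client review", "Producer",
                "Client notes consolidated", "Review platform, client thread"),
    ReviewStage("Revision rounds", "Producer",
                "Agreed rounds completed", "Review platform, client thread"),
    ReviewStage("Final approval and delivery", "Producer",
                "Written approval captured", "Review platform, client thread"),
]

for stage in STAGES:
    gaps = missing_fields(stage)
    print(stage.name, "OK" if not gaps else f"missing: {gaps}")
```

A stage with a blank owner or exit condition shows up immediately, which is the point: the gaps are visible before the project starts rather than halfway through it.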

Chapter 2: Where Video Review Workflows Break Down

Most creative teams do not have a review workflow problem. They have five smaller problems that compound each other until the whole thing collapses under the weight of a big project.

Problem 1: Feedback Is Disconnected from the Content

This is the root cause of most review friction. When a client sends notes in an email, those notes describe the video from memory. The editor then has to cross-reference the written feedback against the actual timeline to figure out what frame the client is talking about. A comment like "the transition around halfway through feels too slow" could mean frame 320 or frame 640 depending on where the client was paying attention.

Frame-accurate feedback, where comments are pinned to a specific timecode in the video itself, removes this guesswork. The editor sees the comment at the exact frame it refers to, so nobody has to work out where in the timeline the note applies.
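To show how far apart those two readings of "halfway through" really are, here is a minimal sketch that converts a frame number into an `HH:MM:SS:FF` timecode. It assumes a whole-number frame rate and non-drop-frame counting; the function name and the 24 fps default are illustrative choices, not something any particular tool prescribes.

```python
def frame_to_timecode(frame: int, fps: int = 24) -> str:
    """Convert a zero-based frame number to non-drop-frame HH:MM:SS:FF."""
    if frame < 0 or fps <= 0:
        raise ValueError("frame must be >= 0 and fps must be > 0")
    total_seconds, frames = divmod(frame, fps)
    total_minutes, seconds = divmod(total_seconds, 60)
    hours, minutes = divmod(total_minutes, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


print(frame_to_timecode(320))  # 00:00:13:08
print(frame_to_timecode(640))  # 00:00:26:16
```

At 24 fps the two candidate frames sit more than 13 seconds apart, which is plenty of room for an editor to fix the wrong transition.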

Problem 2: Multiple Feedback Channels

When feedback comes in through email, WhatsApp, phone calls, Slack messages, and verbal notes on calls, consolidating it into a coherent revision brief is its own project. Information gets missed. Conflicting notes go unresolved. The editor makes changes based on a partial read of the feedback and the client wonders why three of their comments were ignored.

The fix is simple in principle: one place for all feedback, non-negotiable for every stakeholder. The harder part is enforcing it when clients are used to emailing notes.

Problem 3: Version Control Lives in the File Name

"v4_FINAL_clientapproved_v2_USE_THIS.mp4" is a joke that has appeared in every creative studio's shared drive at least once. When version history lives in the file name instead of a structured system, finding the right version becomes a research project. Editors make changes to the wrong version. Clients approve something and then ask for a change that was already addressed two versions ago.
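As a rough illustration of what "a structured system" can mean, the sketch below keeps the version number and approval state in a record rather than in the file name. The `Version` and `VersionHistory` classes and the file paths are hypothetical; a real review platform would store this for you.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Version:
    number: int
    file_path: str
    approved_by: Optional[str] = None  # None until someone signs off


@dataclass
class VersionHistory:
    versions: list = field(default_factory=list)

    def add(self, file_path: str) -> Version:
        """Register a new cut; numbering is assigned, never typed by hand."""
        version = Version(number=len(self.versions) + 1, file_path=file_path)
        self.versions.append(version)
        return version

    def approve(self, number: int, approver: str) -> None:
        self.versions[number - 1].approved_by = approver

    def latest(self) -> Optional[Version]:
        return self.versions[-1] if self.versions else None

    def latest_approved(self) -> Optional[Version]:
        approved = [v for v in self.versions if v.approved_by]
        return approved[-1] if approved else None


history = VersionHistory()
history.add("cuts/spot_a.mp4")
history.add("cuts/spot_b.mp4")
history.approve(1, "client contact")
print(history.latest().number, history.latest_approved().number)
```

The midnight question of whether v4 or v5 is going out becomes a lookup instead of a guess.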

Problem 4: Client Feedback Is Reactive, Not Contextual

When someone watches a video alone and writes notes afterward, the feedback is retrospective. The video has already ended. They are working from memory and general impression rather than from a specific frame they can point to. This produces vague, impressionistic feedback that is hard to action.

When the same person is reviewing in a shared session where they can pause, rewind, and comment in real time, the quality of feedback changes significantly. They point to exactly what they mean. Questions get answered in the moment. The revision brief that comes out of a session like this is dramatically cleaner.

Problem 5: Review Tools Are Not Built for Video

General file-sharing tools like Google Drive, Dropbox, and WeTransfer were built for file delivery, not for creative review. They have no concept of a timecode. They cannot anchor a comment to a specific frame. They do not track version history in a structured way. Using them for video review is like driving a screw with a hammer: it technically works, but not well.

The Compounding Effect

None of these problems are fatal in isolation. A small project with a single clear-thinking client can survive email feedback and manual version control. The problem is that these five issues compound. Vague feedback leads to more revision rounds. More rounds mean more email threads. More threads mean more things fall through the cracks. By the time a team is running five concurrent productions, the patchwork falls apart completely.

Chapter 3: The Rise of Real-Time Synchronized Review

The single biggest shift in how creative teams review video over the past few years has been the move from asynchronous feedback to synchronized, real-time review sessions. Asynchronous review has always been the default: the editor exports a cut, uploads it, shares a link, the client watches it at some point, writes notes, and sends them back. The process is slow, the feedback is disconnected from the content, and the communication lag can stretch a single feedback round across several days.

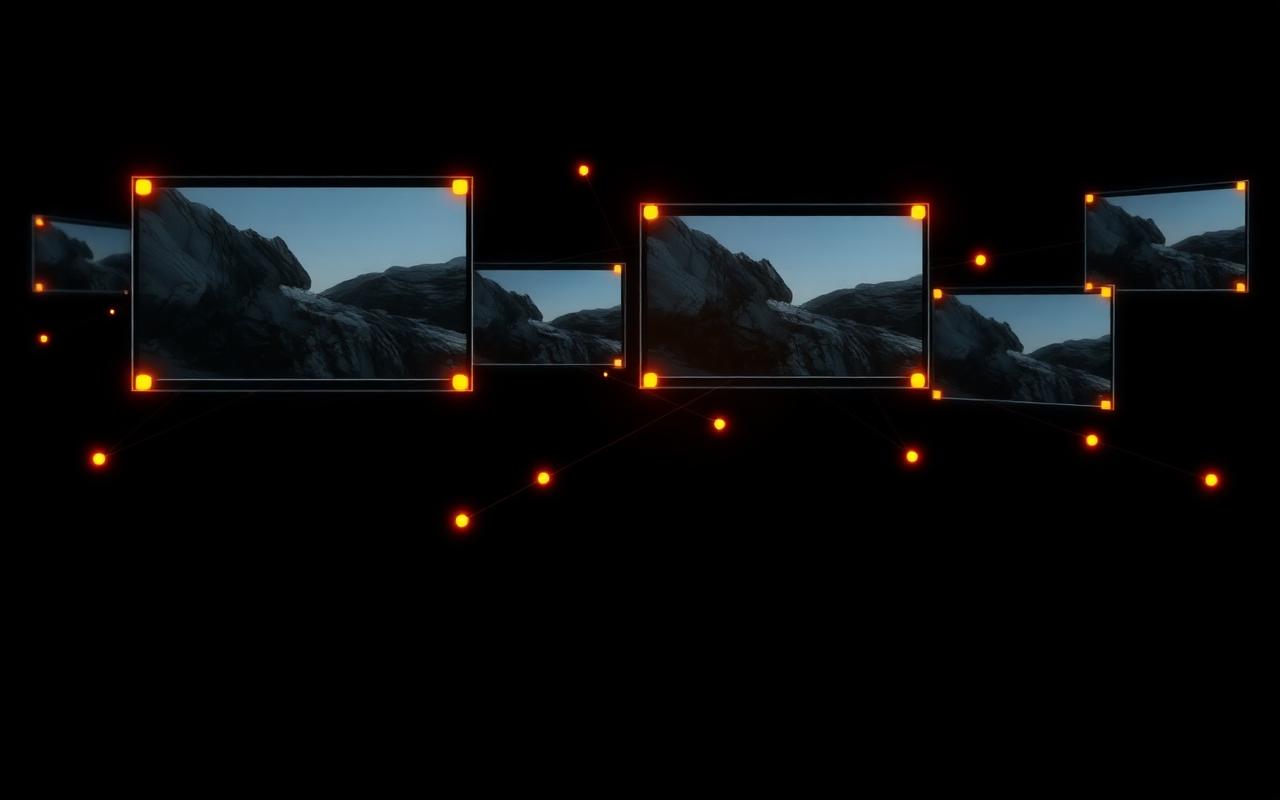

Synchronized review works differently. Every participant in the session sees the same frame at the same moment. The playback is shared. If the client pauses at frame 846, everyone in the session is at frame 846. Comments made in that moment are pinned to that exact timecode. Questions get answered immediately because everyone is looking at the same thing at the same time.
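The idea can be sketched in a few lines. This is an in-memory toy with no networking, and the `ReviewSession` class and its method names are hypothetical; it only illustrates the model described above, where one shared playhead drives every participant and comments inherit the current frame.

```python
from typing import Callable


class ReviewSession:
    """Toy model of a shared playhead. Real platforms sync over a network."""

    def __init__(self) -> None:
        self.frame = 0
        self.comments = []    # (frame, author, text) tuples
        self._listeners = []  # one callback per participant

    def join(self, on_frame: Callable[[int], None]) -> None:
        self._listeners.append(on_frame)
        on_frame(self.frame)  # late joiners start at the shared frame

    def seek(self, frame: int) -> None:
        """Move the shared playhead and notify every participant."""
        self.frame = frame
        for notify in self._listeners:
            notify(frame)

    def comment(self, author: str, text: str) -> None:
        """Pin the comment to whatever frame everyone is looking at."""
        self.comments.append((self.frame, author, text))


positions = {}
session = ReviewSession()
session.join(lambda f: positions.update(editor=f))
session.join(lambda f: positions.update(client=f))

session.seek(846)  # the client pauses here
session.comment("client", "Title comes in too early")
print(positions)         # {'editor': 846, 'client': 846}
print(session.comments)  # [(846, 'client', 'Title comes in too early')]
```

Nobody types a timecode in this model. The comment takes its position from the shared playhead, which is why it cannot drift from what the group was actually watching.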

Why This Matters More Than It Sounds

The shift from asynchronous to synchronized review does not just save time. It changes the quality of the conversation. In an async review, the client is the sole interpreter of their own feedback. They write notes alone, using language that makes sense to them. The editor then has to translate those notes back into frame-level decisions, often without being able to ask follow-up questions until the next scheduled call.

In a synchronized session, the creative team can ask questions as the client is forming their thoughts. A question like "when you say the pacing feels off here, do you mean the cut timing, or the motion on the title?", answered in real time, can save a revision round. Multiply that across five feedback moments in a single session and the revision cycle can shrink by days.

Synchronized Review vs Screen Sharing

Screen sharing over Zoom or Google Meet does allow everyone to see the same image, but it has significant limitations for professional video review. Video quality degrades over screen share compression. Frame accuracy is lost because you are watching a compressed stream rather than the original file. And there are no annotation or timecode-pinned comment tools, so feedback still ends up in the chat window or an email afterward.

A purpose-built synchronized review platform handles playback at full quality, anchors comments to specific frames, and gives all participants the same contextual view of the content. This is a fundamentally different experience from screen sharing, and it produces fundamentally different feedback.

Chapter 4: Building a Video Review Workflow That Scales

The best review workflows are not complicated. They are deliberate. The difference between a team that scales smoothly and one that drowns in revision chaos is usually not the tools they use. It is whether they have made explicit decisions about the things most teams leave to chance.

Step 1: Define Your Stages Before the Project Starts

Every project should begin with a documented review structure. How many client-facing rounds are included? Who has approval authority on the client side? What is the turnaround expectation for each round? What is the process for feedback that arrives outside the defined channels? These questions feel bureaucratic until the first project where nobody agreed on the answers. Then they feel essential.

Step 2: Assign One Owner per Stage

Every review stage should have a single person responsible for moving it forward. Not a committee. Not shared ownership. One person who knows it is their job to collect feedback, resolve conflicts, and advance the project to the next stage. On the client side, this means asking your point of contact to consolidate feedback from multiple internal stakeholders before submitting it. Consolidated feedback is actionable feedback.

Step 3: One Platform, Non-Negotiable

Choose a review platform and make it the only place feedback lives. Not one place per stakeholder — one place per project. When a client emails notes anyway, do not reply to the email. Log the notes in the review platform and acknowledge in the email that you have captured them there. When clients learn that notes submitted in the review tool get actioned faster than notes sent by email, they stop sending emails.

Step 4: Keep Internal Review Separate from Client Review

Your team should be able to have honest conversations about a project that the client does not see. Purpose-built review tools allow you to mark versions as internal-only, with separate comment threads for team discussion versus client-visible feedback. This preserves the team's ability to be honest with each other without filtering every thought for a client audience.

Step 5: Use Frame-Accurate, Timecoded Feedback

This is the single most impactful operational decision a creative team can make. If your feedback process does not anchor comments to specific timecodes in the video, every piece of feedback requires an interpretation step before it can be actioned. That interpretation step is where miscommunication lives. Frame-accurate commenting eliminates the interpretation step. The comment is in the frame. The editor sees it in the frame. There is no ambiguity about what the note refers to.

Chapter 5: Choosing the Right Video Review Software

The market for video review and online proofing tools has grown significantly over the past few years. This is good news for creative teams, but it also means there is more to evaluate. Not all platforms are built the same, and choosing the wrong one means months of friction before the team migrates again.

What Actually Matters

The most important capability is frame-accurate commenting. Comments should be pinned to a specific timecode, not floating in a side panel with no reference to where in the video they apply. Without this, you have not solved the core problem of video review.

Version control is the second critical feature. The platform should maintain a structured history of every version reviewed, with the ability to compare versions and see which comments apply to which cut. Manual version control in file names is a guarantee of confusion at scale.

Access for external reviewers is the third consideration. Clients should be able to review and comment without needing to create an account, pay for a seat, or download software. Any friction in the client-facing experience means feedback will start arriving by email again.

Synchronized playback is the fourth feature, and the one that separates platforms built for professional creative teams from general-purpose proofing tools. The ability to run a live review session where all participants see the same frame simultaneously compresses feedback cycles and produces cleaner revision briefs.

Questions to Ask When Evaluating Video Review Tools

- Can clients leave comments without creating an account or paying for a seat?

- Are comments anchored to specific timecodes, or do they float independently of the timeline?

- Does the platform support synchronized, real-time review sessions?

- How does the platform handle version history? Can you compare v3 and v5 side by side?

- What file formats are supported? Does it handle the formats your team actually works with?

- Does it integrate with the editing and production tools your team already uses?

- What does the client-facing experience look like? Is it simple enough for a non-technical stakeholder to use without guidance?

ReviewRoom: Synchronized Review Built for Creative Teams

ReviewRoom is a purpose-built video review platform designed around the synchronized session model. The core workflow is straightforward: upload the cut, invite participants, start a session. When the session is live, every participant sees the same frame simultaneously. Comments are pinned to specific timecodes. Feedback accumulates in context, on the content, in real time.

The platform handles the file formats creative teams actually work with, not just web-optimized video. Client access is frictionless, with no account creation required for reviewers. Version history is structured and accessible across the project lifecycle. For teams that have spent years managing video feedback through email threads and ad-hoc calls, the shift to synchronized review feels less like a software switch and more like a workflow upgrade.

Chapter 6: Scaling Your Review Workflow

A workflow that works for two people and one project will not automatically work for eight people and ten projects. Scaling a review workflow requires the same deliberate decisions that built the original process, applied at a larger scope.

Standardize the Template, Not the Creative

Every project should start from the same review structure template: defined stages, named owners, turnaround expectations, feedback channel rules. The creative work is unique to each project. The process surrounding it does not need to be. Teams that build a standard project template can onboard new team members and new clients faster, because both groups are entering a process that behaves predictably.

Train Clients, Not Just Your Team

Client behavior is a workflow input. If your clients consistently send feedback in formats that are hard to action, the problem is not the clients. It is the absence of a brief onboarding process that sets expectations before the first review round begins. A short, clear client guide — one page or less — explaining how the review process works, where to submit feedback, and what turnaround looks like, can eliminate most of the behavioral friction that slows projects down.

Track What Is Slowing You Down

Once a review workflow is running, the data it generates is useful. How many rounds of revision does an average project take? Which stage takes the longest? Which clients tend to produce the most actionable feedback versus the most vague feedback? Teams that look at these patterns can identify the specific points in their workflow that need attention and address them before the next similar project starts.
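Two of those questions can be answered with very little code once the numbers are written down. The sketch below assumes each finished project is logged as a dictionary with a `rounds` count and a `stage_days` mapping; that record shape, the function names, and the sample figures are all made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def average_rounds(projects: list) -> float:
    """Mean number of revision rounds across finished projects."""
    return mean(p["rounds"] for p in projects)


def slowest_stage(projects: list) -> str:
    """Stage with the highest average duration in days."""
    durations = defaultdict(list)
    for project in projects:
        for stage, days in project["stage_days"].items():
            durations[stage].append(days)
    return max(durations, key=lambda stage: mean(durations[stage]))


# Hypothetical sample data, not real measurements.
projects = [
    {"rounds": 3, "stage_days": {"internal": 1, "first client": 4, "revisions": 3}},
    {"rounds": 2, "stage_days": {"internal": 2, "first client": 6, "revisions": 2}},
]

print(average_rounds(projects))  # 2.5
print(slowest_stage(projects))   # first client
```

Even a spreadsheet export in this shape is enough to see which stage deserves attention before the next similar project starts.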

Know When to Expand the Toolset

A single review platform handles the core of the workflow. As teams scale, they sometimes need to connect that platform to a broader project management system, a digital asset management tool, or a client communication hub. The key is to add tools when they solve a real problem, not because they are available. Every tool in the stack should have a clear, specific job. When tools start overlapping in function, the team starts having to make decisions about which one to use — which is exactly the kind of friction a good workflow is supposed to eliminate.

Conclusion: Structure Is the Competitive Advantage

The creative industry is not short of talented editors, skilled motion designers, or strong post-production houses. What separates the studios that grow from the ones that stagnate is rarely the quality of the work. It is the quality of the process around the work.

A well-structured video review workflow is not glamorous. It does not show up in the reel. But it is the difference between a team that delivers predictably and one that scrambles on every project. It is the difference between a client relationship that grows and one that erodes under the friction of repeated miscommunication.

The tools available to creative teams in 2026 are better than they have ever been. Synchronized review, frame-accurate commenting, structured version control, and frictionless client access are no longer enterprise-only features. They are accessible to studios of any size.

The teams that build a deliberate review workflow now will be able to scale with confidence later. The ones that keep patching the email thread will keep hitting the same ceiling.

Start building a review workflow that scales at reviewroom.studio — free to start, no credit card required.
